An article that I co-authored with Chris Wikle has just been published in a Special Issue of the journal Spatial Statistics, edited by Alan Gelfand. An arXiv version is here while the reproducible code can be found here. The methodology in this paper is one that I find very exciting: it essentially takes a framework that has recently appeared in Machine Learning and sets it firmly within a hierarchical statistical modelling framework.

The idea behind it all is relatively simple. Spatio-temporal phenomena are generally governed by diffusions and advections that are constantly changing in time and in space. Modelling these spatio-temporally varying dynamics is hard; estimating them is even harder! Recently, de Bézenac et al. published this very insightful paper showing how, using ideas taken from solving the optical flow problem, one can train a convolutional neural network (CNN) that takes as input an image sequence exhibiting the spatio-temporal dynamics, and spits out a map showing the spatially-varying advection parameters. In other words: let the CNN see what happened in the past few time instants and it will tell you in which direction things are flowing, and how strongly they are flowing!
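To make "a map of advection parameters" a bit more concrete, here is a minimal NumPy sketch of one simple way such a map could be used to push an image one step forward in time. It is not the code from de Bézenac et al. or from our paper: the function name `advect`, the flow arrays `u` and `v` (in pixels per time step) and the backward bilinear-interpolation scheme are all assumptions made for illustration, and the flow here is constant in space, whereas the CNN would output a different value at every pixel.

```python
import numpy as np

def advect(image, u, v):
    """Move `image` one time step along the flow (u, v), in pixels per step.

    Backward scheme: the new value at pixel (row, col) is read from the old
    image at (row - v, col - u), using bilinear interpolation.
    """
    n_rows, n_cols = image.shape
    rows, cols = np.meshgrid(np.arange(n_rows), np.arange(n_cols), indexing="ij")
    # Departure points, clipped so that we never read outside the image.
    src_r = np.clip(rows - v, 0, n_rows - 1)
    src_c = np.clip(cols - u, 0, n_cols - 1)
    r0 = np.floor(src_r).astype(int)
    c0 = np.floor(src_c).astype(int)
    r1 = np.minimum(r0 + 1, n_rows - 1)
    c1 = np.minimum(c0 + 1, n_cols - 1)
    wr = src_r - r0  # interpolation weights along rows
    wc = src_c - c0  # interpolation weights along columns
    top = (1 - wc) * image[r0, c0] + wc * image[r0, c1]
    bottom = (1 - wc) * image[r1, c0] + wc * image[r1, c1]
    return (1 - wr) * top + wr * bottom

# Toy example: a Gaussian blob centred at (row 10, col 10) on a 32 x 32 grid,
# moved by a flow of 3 pixels per step along columns and 2 along rows.
rows, cols = np.mgrid[0:32, 0:32]
blob = np.exp(-((rows - 10) ** 2 + (cols - 10) ** 2) / 8.0)
u = np.full(blob.shape, 3.0)
v = np.full(blob.shape, 2.0)
moved = advect(blob, u, v)
peak = np.unravel_index(np.argmax(moved), moved.shape)
```

In this toy example the peak of the blob ends up at row 12, column 13, as one would expect from the flow.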

Their code was written in PyTorch, but since R has got an awesome TensorFlow API I decided to write my own code using their architecture and see if it works. I honestly am not sure how ‘deconvolution’ works in a CNN (which is part of their architecture), so what I did was implement a version of their CNN where I just go into a low-dimensional space and stay there (I then use basis functions to go back to my original domain). Without going into details, the architecture we ended up with looks like this:

The performance was impressive right from the start… even on a few simple image sequences of spheres moving around a domain we could estimate dynamics really well.

But de Bézenac’s framework has a small problem: it only works for complete images which are perfectly observed. What happens if some of the pixels are missing or the images are noisy? Well, this is where hierarchical statistical models come in really useful. What we did was recast the CNN as a (high-order) state-space model, and run an ensemble Kalman filter using it as our ‘process model.’ The outcome is a framework which allows you to do extremely fast inference on spatio-temporal dynamic systems with little to no parameter estimation! (Disclaimer: when using a CNN that is already trained!)
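For readers who have not met the ensemble Kalman filter before, the sketch below shows a single update step of a textbook stochastic (perturbed-observation) variant when some pixels are missing. It is only an illustration under assumptions of my choosing, not the implementation in the paper: the names `enkf_update`, `observed` and `obs_var` are hypothetical, the measurement noise is taken to be independent with a known variance, and the forecast ensemble is simply simulated here, whereas in our framework each member would be pushed forward by the CNN-based process model.

```python
import numpy as np

def enkf_update(ensemble, y, observed, obs_var, rng):
    """One stochastic EnKF update.

    ensemble : (n_members, n_pixels) forecast ensemble
    y        : (n_pixels,) data; entries at unobserved pixels are ignored
    observed : (n_pixels,) boolean mask of the pixels that were observed
    obs_var  : measurement-error variance (assumed known, independent noise)
    """
    n_members = ensemble.shape[0]
    n_obs = int(observed.sum())
    anomalies = ensemble - ensemble.mean(axis=0)
    anomalies_obs = anomalies[:, observed]
    # Sample covariances: state vs observed pixels, and observed vs observed.
    cov_xo = anomalies.T @ anomalies_obs / (n_members - 1)
    cov_oo = anomalies_obs.T @ anomalies_obs / (n_members - 1)
    cov_oo = cov_oo + obs_var * np.eye(n_obs)
    # Perturb the observations separately for every ensemble member.
    noise = rng.normal(0.0, np.sqrt(obs_var), size=(n_members, n_obs))
    innovations = y[observed] + noise - ensemble[:, observed]
    # Transposed Kalman gain: solve cov_oo @ gain_T = cov_xo.T
    gain_T = np.linalg.solve(cov_oo, cov_xo.T)
    return ensemble + innovations @ gain_T

# Toy example: the forecast is biased upwards and every other pixel is missing.
rng = np.random.default_rng(0)
n_pixels, n_members = 20, 200
truth = np.sin(np.linspace(0.0, np.pi, n_pixels))
offsets = rng.normal(1.0, 0.5, size=(n_members, 1))
forecast = truth + offsets + rng.normal(0.0, 0.1, size=(n_members, n_pixels))
observed = np.arange(n_pixels) % 2 == 0
y = np.full(n_pixels, np.nan)  # NaN marks a missing pixel
y[observed] = truth[observed] + rng.normal(0.0, 0.1, size=int(observed.sum()))
analysis = enkf_update(forecast, y, observed, obs_var=0.01, rng=rng)

missing = ~observed
err_before = np.abs(forecast.mean(axis=0) - truth)[missing].mean()
err_after = np.abs(analysis.mean(axis=0) - truth)[missing].mean()
```

The point of the toy example is that the missing pixels get corrected too, because the ensemble carries the covariance between observed and unobserved locations; `err_after` should come out well below `err_before`.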

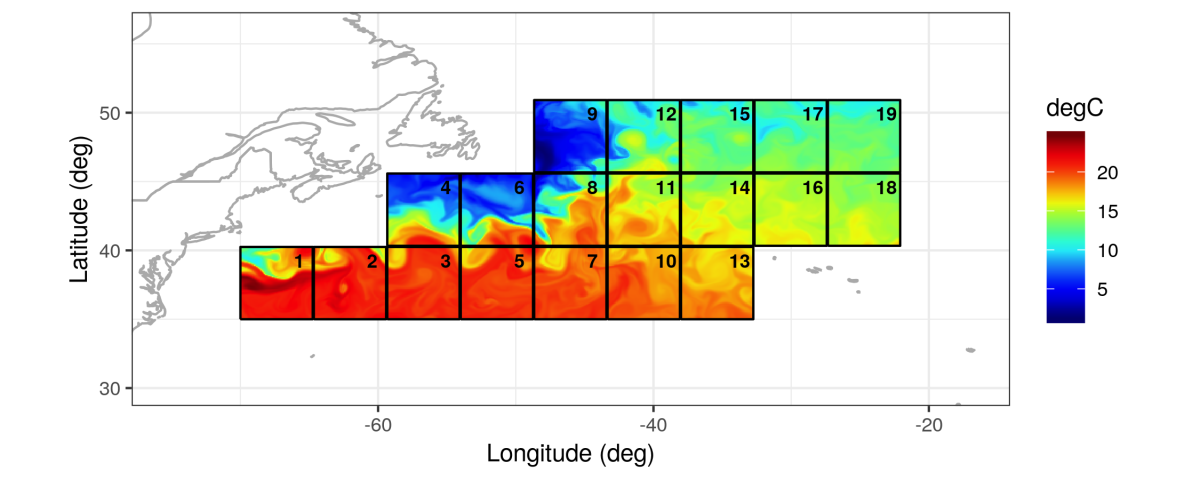

To show the flexibility of this methodology we trained the network on about 80,000 sequences of (complete) images of sea-surface temperature from a publicly available data set (the same data set used by de Bézenac et al., see the image at the top of this page and the video here), and then used it to predict the advection parameters of a Gaussian kernel moving in space (which we just simulated ourselves). The result is impressive — even though none of the training images resemble a Gaussian kernel moving in space, the CNN can tell us where and in which direction ‘stuff’ is moving. The CNN has essentially learnt to understand basic physics from images.

All of this gets a bit more crazy: we used the CNN in combination with an ensemble Kalman filter to nowcast rainfall. Remember that we trained this CNN on sea-surface temperatures! The nowcasts were better in terms of both mean-squared prediction error and continuous ranked probability score (CRPS) than those of any other method we threw at the problem (uncertainties appear to be very well-calibrated with this model). To add icing on the cake, it gave us the nowcast in a split second… Have a look at the article for more details.
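In case the CRPS is unfamiliar: for an ensemble forecast it can be estimated as the mean absolute error of the members minus half the mean absolute difference between pairs of members, so it rewards ensembles that are both close to the observation and not needlessly spread out (lower is better). The sketch below uses this standard estimator for a single location; the function name `crps_ensemble` is mine, and this is not necessarily the exact code used for the results in the paper.

```python
import numpy as np

def crps_ensemble(members, obs):
    """Empirical CRPS of an ensemble forecast for one scalar observation.

    CRPS = mean|x_i - obs| - 0.5 * mean|x_i - x_j|   (lower is better)
    """
    members = np.asarray(members, dtype=float)
    accuracy = np.mean(np.abs(members - obs))
    spread = 0.5 * np.mean(np.abs(members[:, None] - members[None, :]))
    return accuracy - spread

# With a single member the CRPS reduces to the absolute error.
single = crps_ensemble([2.0], 5.0)

# Two members at 0 and 2, observation at 1.
pair = crps_ensemble([0.0, 2.0], 1.0)
```

Here `single` is 3.0 and `pair` is 0.5. Averaging this quantity over all pixels and time points gives a summary score for a set of nowcasts.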